NeuroDetect AI

Brain tumors represent a significant global health concern, where precise and timely diagnosis is crucial for effective treatment and patient prognosis. Current reliance on manual interpretation of Magnetic Resonance Imaging (MRI) can be time-intensive, subject to inter-observer variability, and susceptible to diagnostic errors. This is particularly evident in differentiating various tumor types and in assessing glioma malignancy, the latter often necessitating invasive biopsy procedures which carry inherent risks.

This project, NeuroDetect, addresses these diagnostic challenges by developing and evaluating a sophisticated dual-component computational system.

The first component targets multi-class brain tumor characterization (Glioma, Meningioma, Pituitary, No Tumor). It employs an ensemble approach, integrating features from an established convolutional network (ResNet50) with a bespoke network architecture enhanced by refined feature selection mechanisms, achieving approximately 98.55% accuracy on a public Kaggle MRI dataset.

The second component focuses on non-invasive glioma grade differentiation (Low-Grade vs. High-Grade). A novel hybrid computational architecture was developed, which synergistically combines information extracted from two distinct pre-trained image analysis backbones (ResNetV2 and EfficientNetB0). This is further augmented by a targeted feature-weighting mechanism applied after information fusion from 2D axial FLAIR and T1ce MRI slices (BraTS 2019 dataset), yielding approximately 91.86% accuracy and 0.83 AUC.
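As a rough illustration of fusing two backbones and then re-weighting the fused features, the sketch below concatenates two feature vectors and scales each entry by a sigmoid gate. It is a toy in plain Python, not the project's architecture: the function name `fuse_and_reweight`, the gate logits, and the example values are all assumptions, and in a trained network the gates would be learned rather than supplied by hand.

```python
import math


def sigmoid(x: float) -> float:
    """Squash a real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def fuse_and_reweight(feat_a, feat_b, gate_logits):
    """Concatenate two feature vectors, then scale each fused feature by a gate.

    feat_a, feat_b: feature vectors from two hypothetical backbones.
    gate_logits:    one logit per fused feature (assumed to be learned elsewhere).
    """
    fused = list(feat_a) + list(feat_b)  # fusion by concatenation
    if len(gate_logits) != len(fused):
        raise ValueError("one gate logit is required per fused feature")
    # A gate near 1 keeps the feature; a gate near 0 suppresses it.
    return [value * sigmoid(logit) for value, logit in zip(fused, gate_logits)]
```

With all gate logits at zero, every gate equals 0.5, so the fused vector is simply halved; strongly positive or negative logits would instead keep or suppress individual features.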

NeuroDetect is prototyped as a user-friendly mobile application, demonstrating the potential for advanced computational tools to provide accessible, accurate, and rapid decision support for clinicians, with the aim of improving diagnostic pathways and patient care.

PLANT-EASE

PLANT-EASE is a deep learning-based web application that detects diseases in cinnamon plants and provides visual explanations using Explainable AI (XAI) techniques. A custom CNN model is trained on a cinnamon plant image dataset to classify diseases such as leaf spot, rough bark, and stripe canker. The system integrates Grad-CAM++ and HiRes-CAM to highlight the image regions that influenced each prediction, helping farmers and agricultural experts understand and trust the AI’s decisions.

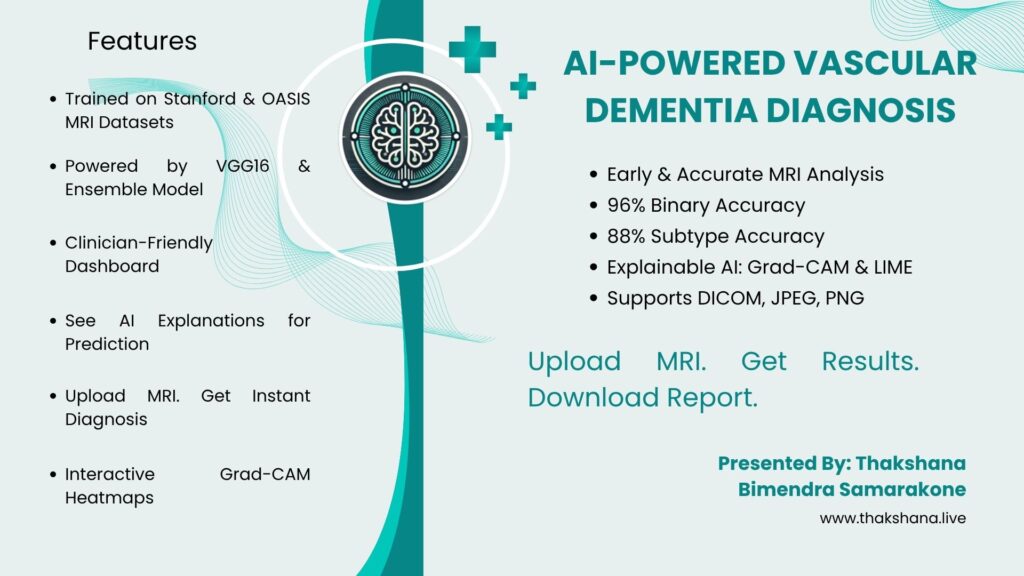

Neuro-Find: AI-Enhanced Early Detection of Cognitive Impairment using MRI

Vascular dementia (VaD) presents significant diagnostic challenges due to its heterogeneous clinical manifestations and the overlap of symptoms with other neurological disorders. Traditional diagnostic approaches rely heavily on expert interpretation of MRI scans, a process that is often time-consuming, subjective, and susceptible to inter-observer variability. To address these limitations, an AI-powered web application has been developed to facilitate rapid, accurate, and interpretable diagnosis of vascular dementia using brain MRI data.

This system employs advanced deep learning architectures, including VGG16 and DenseNet121, to perform both binary classification (distinguishing VaD-demented from non-demented cases) and multi-class subclassification into four VaD subtypes: Binswanger, Hemorrhagic, Strategic, and Subcortical dementia. The prototype demonstrated a testing accuracy of 96% for binary classification and 88.25% for subtype analysis. A distinctive feature of the system is the integration of explainable AI techniques, such as Grad-CAM and LIME, which provide visual and textual explanations to support clinical decision-making and foster trust in AI-driven outcomes.

The web-based interface supports DICOM, JPEG, and PNG formats, enabling clinicians to efficiently upload MRI scans, receive diagnostic predictions, and download comprehensive reports. By combining high diagnostic performance with transparency and user accessibility, this prototype aims to bridge the gap between artificial intelligence research and clinical practice, offering healthcare professionals a valuable tool for timely and reliable vascular dementia diagnosis and management.

Microeconomic Level Household Income Sufficiency Predictor Using a Novel Hybrid Deep Learning Approach with XAI

This project presents a comprehensive analysis of the microeconomic-level household income sufficiency indicator through the application of advanced machine learning techniques. The core objective is to develop an intelligent system capable of accurately predicting household expenditures and assessing income sufficiency at a microeconomic scale. To achieve this, a two-tiered modeling approach is employed.

The primary model is a novel hybrid deep learning architecture designed specifically for predicting household expenditures. This model integrates the strengths of a convolutional neural network, a multi-layer perceptron, and XGBoost, thereby enhancing the accuracy and robustness of expenditure predictions.

Complementing this, a secondary model predicts the household income sufficiency indicator. This model not only processes household input data but also integrates Explainable Artificial Intelligence (XAI) techniques. The inclusion of XAI makes the model’s predictions more transparent and trustworthy, enabling stakeholders to understand the reasoning behind the sufficiency assessments. Such interpretability is essential for householders who require clear and actionable insights for effective decision-making.

Together, the dual-model framework provides a practical and scalable solution for understanding and addressing income adequacy at the household level, significantly contributing to socio-economic planning and efforts to reduce household income insufficiency through data-driven intelligence and explainable outcomes.

LLM based Automatic Speech Recognition for Medical Documentation

This project applies Automatic Speech Recognition (ASR) to the medical domain, with an emphasis on improving transcription accuracy through a Large Language Model (LLM) based approach.

The main challenge addressed is manual documentation, which is time-consuming and laborious. ASR can transcribe medical conversations, but it still struggles with the intricacies of patient-doctor consultations. These problems arise mostly from the complexity of medical language, nuanced phrases, detailed medical terms, and the variety of speakers’ accents. General-purpose ASR models typically perform poorly on specialized vocabulary and make frequent transcription errors in such domain-specific settings, and differing accents further disrupt word recognition. The issue is serious because inaccurate transcription may affect patients’ diagnosis and treatment. An illustrative example is “Cystic fibrosis” being misrecognized as “65 Roses”.

This work analyzes how context, medical terminology, and accents relate to ASR output, in order to address weaknesses in current technology and thereby improve ASR accuracy. The approach is to build a medical ASR system that takes context into account and adapts to the accents of both patients and healthcare providers.

The system’s initial ASR component recorded a Word Error Rate (WER) of 12%. A Large Language Model (LLM) was then used to correct the errors made by the speech recognizer. This LLM-based correction step helps the system interpret whole sentences in context rather than isolated words. The system relies on deep learning methods, especially neural networks and contextual understanding, for speech recognition in the medical domain.
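For readers unfamiliar with the metric, WER is conventionally defined as the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of reference words. The following is a minimal sketch of that standard definition; the function name and the example sentences are illustrative and are not taken from the project's evaluation code.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the word-level edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Two of the five reference words are substituted, so WER is 2 / 5.
    print(word_error_rate("the patient has cystic fibrosis",
                          "the patient has 65 roses"))  # 0.4
```

A WER of 12% therefore corresponds to roughly 12 word-level errors per 100 reference words.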

The outcome of this project is expected to support the effective deployment of ASR in healthcare settings. This research addresses the critical need for a domain-specific ASR system that adapts to diverse accents and is contextually aware of medical terminology. As a result, it contributes to improving overall patient satisfaction and the productivity of medical documentation in clinical settings.

CliniGuide – Foundational Framework for Medication Recommendation based on Reasoning

CliniGuide addresses the growing need for precise, context-aware medication recommendations in healthcare by leveraging large language models (LLMs) enhanced with Knowledge Distillation (KD) and Retrieval-Augmented Generation (RAG). Unlike generic AI systems that often lack detail and transparency, CliniGuide focuses on delivering explicit, personalized medication suggestions including dosage and medication details while ensuring interpretability aligned with clinical best practices.

The system follows a two-phase framework. First, a teacher LLM generates distilled data to fine-tune a smaller, more efficient student model. This KD process preserves critical medical knowledge while optimizing performance and reducing computational demands. In the second phase, RAG is employed: the system retrieves domain-specific information from a vector store based on user input and combines it with the student model’s reasoning using chain-of-thought prompt engineering. This ensures that recommendations are not only contextually relevant but also transparent and explainable.
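The retrieval phase described above can be sketched in a few lines. This is a toy under stated assumptions, not CliniGuide's implementation: the bag-of-words `embed` function stands in for a learned embedding model, the in-memory `STORE` list stands in for a vector store, the documents are placeholders with no clinical content, and the prompt wording is invented for illustration.

```python
import math
from collections import Counter

# Placeholder documents; a real store would hold vetted clinical references.
STORE = [
    "placeholder note about hypertension management",
    "placeholder note about asthma inhaler use",
    "placeholder note about diabetes diet",
]


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (assumption: stands in for a learned encoder)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, store: list, k: int = 2) -> list:
    """Return the k stored passages most similar to the query."""
    q = embed(query)
    ranked = sorted(store, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list) -> str:
    """Combine retrieved context with a chain-of-thought style instruction."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        f"Context:\n{context}\n\n"
        f"Patient query: {query}\n"
        "Reason step by step using only the context, "
        "then state the recommendation."
    )
```

In a pipeline of this shape, the string returned by `build_prompt` would be passed to the fine-tuned student model, so that its reasoning is grounded in the retrieved passages.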

Preliminary evaluations demonstrate improved clarity and accuracy in medication suggestions. Metrics such as BLEU (0.0196), ROUGE-1 (0.2674), ROUGE-2 (0.0519), and ROUGE-L (0.1348) indicate that CliniGuide effectively captures key concepts and maintains relevance, with room for refinement in textual precision. Overall, CliniGuide represents a significant step toward AI-driven, patient-specific medication recommendation systems that are trustworthy, actionable, and clinically aligned.
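To make the overlap metrics concrete, the sketch below computes ROUGE-N precision, recall, and F1 from n-gram counts using the standard definition. It is a simplified illustration (whitespace tokenization, no stemming) and not the evaluation script used for the scores above; the abstract does not state which ROUGE variant was reported, and the example sentences are made up.

```python
from collections import Counter


def rouge_n(reference: str, candidate: str, n: int = 1) -> dict:
    """ROUGE-N based on clipped n-gram overlap between candidate and reference."""

    def ngrams(text: str) -> Counter:
        tokens = text.lower().split()
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    ref, cand = ngrams(reference), ngrams(candidate)
    # Counter intersection keeps the minimum count of each shared n-gram.
    overlap = sum((ref & cand).values())
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}
```

For the reference "take one tablet twice daily" and the candidate "take one tablet daily", four of the five reference unigrams are matched (ROUGE-1 recall 0.8), but only two of the four reference bigrams are (ROUGE-2 recall 0.5). This is why ROUGE-2 is typically lower than ROUGE-1, as in the figures reported above.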

An Explainable Few-shot Early Defect Detection System for Production Lines

This research presents an explainable system for detecting defects in production lines using only a few sample images. It combines advanced machine learning techniques with visual explanations to quickly identify defects, helping manufacturers improve quality control with minimal training data and greater transparency in decision-making.

Health Sentinel

“Health Sentinel” is a digital platform designed to modernize and streamline the reporting and monitoring of notifiable diseases in Sri Lanka, mitigating the inefficiencies and delays of the current manual reporting system. It enables healthcare professionals to report cases in real time, monitor diseases, and take timely action to prevent their spread. With this system in place, cases are reported instantly, unlike the current process, which can take up to two weeks and in which some cases are never reported at all.

The system accommodates all key stakeholders in the process: doctors, hospitals, medical officers of health (MOH), public health inspectors (PHI), and the epidemiology unit, allowing each of them to perform their respective responsibilities through a centralized, role-based platform. Features such as real-time notifications, interactive dashboards, and map-based visualization enhance operational efficiency. The system also serves the public, who can view confirmed cases and public events hosted in their area.

This streamlined workflow not only improves the speed and accuracy of notifiable disease reporting but also strengthens early detection, response coordination, and public health decision-making across the country.

CSS Code Smell Detector with Machine Learning for VS Code

Cascading Style Sheets (CSS) is one of the most essential tools for styling in front-end web development. In a web application, HTML provides the structural foundation, whereas CSS provides the visual appearance: font styles, colors, layout, spacing, and other elements. As web applications grow in complexity over time, maintaining high-quality, efficient, and clean code has become increasingly challenging. As a result, a growing number of developers are moving to CSS preprocessors or web frameworks. However, with or without knowledge of CSS, using these tools can unintentionally lead to code smells: patterns or practices in the CSS code that are not necessarily incorrect but can hinder the maintainability, scalability, performance, and readability of the code.

Several existing tools, such as CSS Lint, the W3C CSS Validator, and CSS nose, address the quality and validation of CSS code. However, these tools do not incorporate machine learning and do not provide real-time feedback.

This paper investigates the CSS code smells that occur and explores how they can be reduced using machine learning. Given that Visual Studio Code (VS Code) is one of the major platforms on which developers write CSS, this research focuses on delivering this concept as a plugin for that editor. The proposed plugin aims to provide real-time feedback and actionable insights, enabling developers to write cleaner, more optimized, and more maintainable CSS.
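One way a machine learning approach to code smells could begin is by turning a stylesheet into numeric features that a classifier can consume. The sketch below is an assumption about what such a step might look like, not the plugin's design: the chosen features (`!important` usage, ID selectors, selector depth, empty rules) are commonly cited smell indicators picked for illustration, and the regular expression is deliberately crude, ignoring comments and treating combinators as selector parts.

```python
import re

# Matches "selector { declarations }" for flat, non-nested rules.
RULE_RE = re.compile(r"([^{}]+)\{([^{}]*)\}")


def smell_features(css: str) -> dict:
    """Extract a few hypothetical smell indicators from a CSS string."""
    rules = RULE_RE.findall(css)
    # A rule such as ".a, .b { ... }" contributes two selectors.
    selectors = [s.strip() for sel, _ in rules for s in sel.split(",")]
    return {
        "rule_count": len(rules),
        "important_count": css.count("!important"),
        "id_selector_count": sum(1 for s in selectors if "#" in s),
        # Depth is approximated by the number of whitespace-separated parts.
        "max_selector_depth": max((len(s.split()) for s in selectors), default=0),
        "empty_rule_count": sum(1 for _, body in rules if not body.strip()),
    }


SAMPLE_CSS = """
#header .nav ul li a { color: red !important; }
.box { }
.title, .subtitle { margin: 0; }
"""

if __name__ == "__main__":
    print(smell_features(SAMPLE_CSS))
```

A feature dictionary of this kind could be computed each time the file is edited and passed to a trained model, which is one plausible route to the real-time feedback the plugin aims for.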

Sentiment Analysis and Categorization of Mobile App User Reviews

This project aims to develop an automated system for extracting meaningful insights from mobile app user feedback. It addresses the challenges of analyzing unstructured and complex language in reviews. By integrating machine learning and natural language processing techniques, the project focuses on accurately performing sentiment analysis and categorizing feedback into relevant topics, providing actionable insights for app developers to enhance app quality and user satisfaction.